Meta has announced that it will automatically restrict the content visible to teen Instagram and Facebook accounts. Starting today, these accounts will be restricted from accessing harmful content, including posts related to self-harm, graphic violence, and eating disorders.

Meta added that it will start to hide more types of content for teens on Instagram and Facebook, in line with expert guidance.

The company will also start sending teens new notifications that prompt them to update their Instagram privacy settings in a single tap.

“We want teens to have safe, age-appropriate experiences on our apps. We’ve developed more than 30 tools and resources to support teens and their parents, and we’ve spent over a decade developing policies and technology to address content that breaks our rules or could be seen as sensitive,” Meta said in a statement.

“Today, we’re announcing additional protections that are focused on the types of content teens see on Instagram and Facebook.”

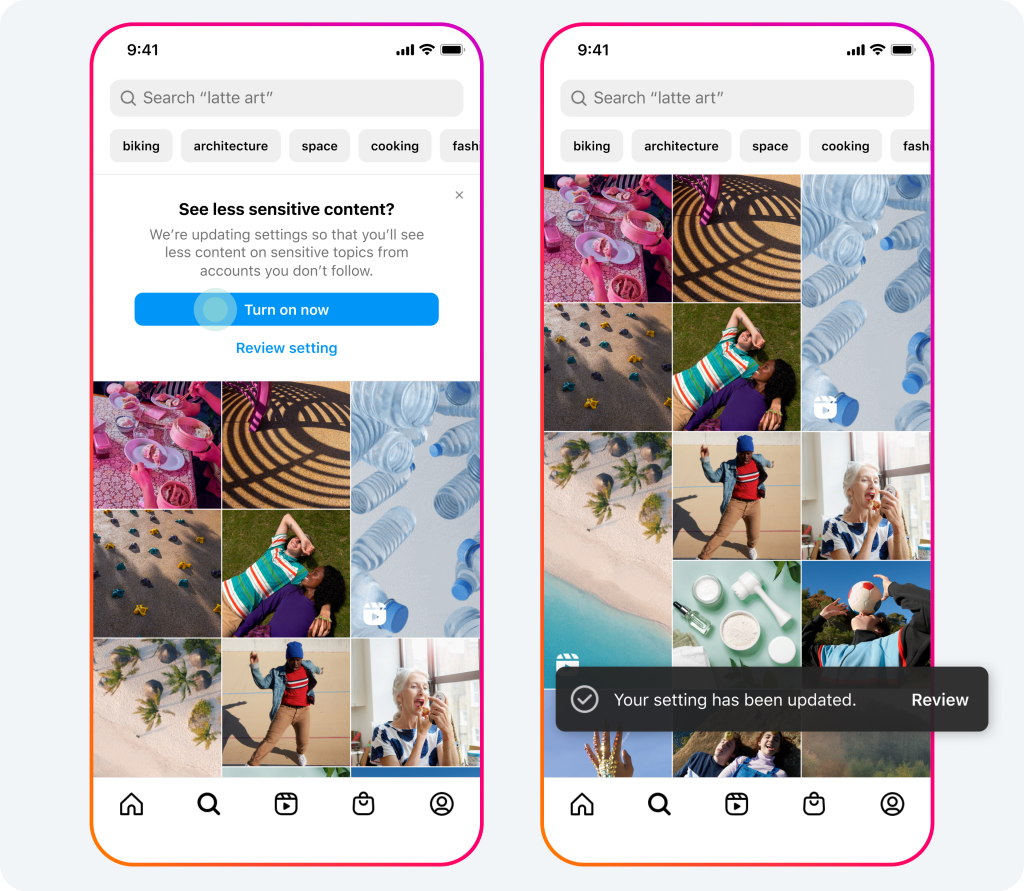

Updates to Instagram’s and Facebook’s Content Recommendation Settings for Teens

“We’re automatically placing teens into the most restrictive content control setting on Instagram and Facebook. We already apply this setting for new teens when they join Instagram and Facebook, and are now expanding it to teens who are already using these apps,” says Meta.

“Our content recommendation controls – known as ‘Sensitive Content Control’ on Instagram and ‘Reduce’ on Facebook – make it more difficult for people to come across potentially sensitive content or accounts in places like Search and Explore.”

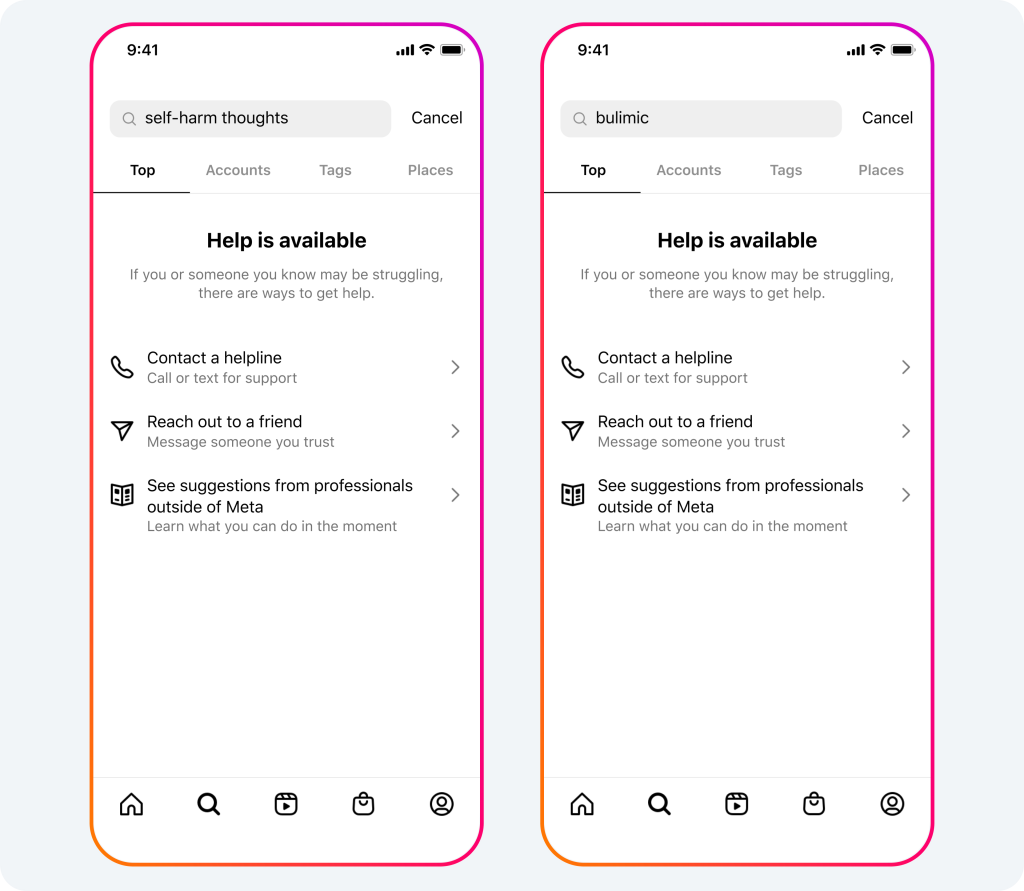

Hiding More Results in Instagram Search Related to Suicide, Self-Harm and Eating Disorders

The company notes that while it allows people to share content discussing their own struggles with suicide, self-harm and eating disorders, its policy is not to recommend this content, and it has been focusing on ways to make it harder to find.

“Now, when people search for terms related to suicide, self-harm and eating disorders, we’ll start hiding these related results and will direct them to expert resources for help. We already hide results for suicide and self harm search terms that inherently break our rules and we’re extending this protection to include more terms. This update will roll out for everyone over the coming weeks.”